Bandwidth vs Latency: What’s the Difference?

Quick Answer

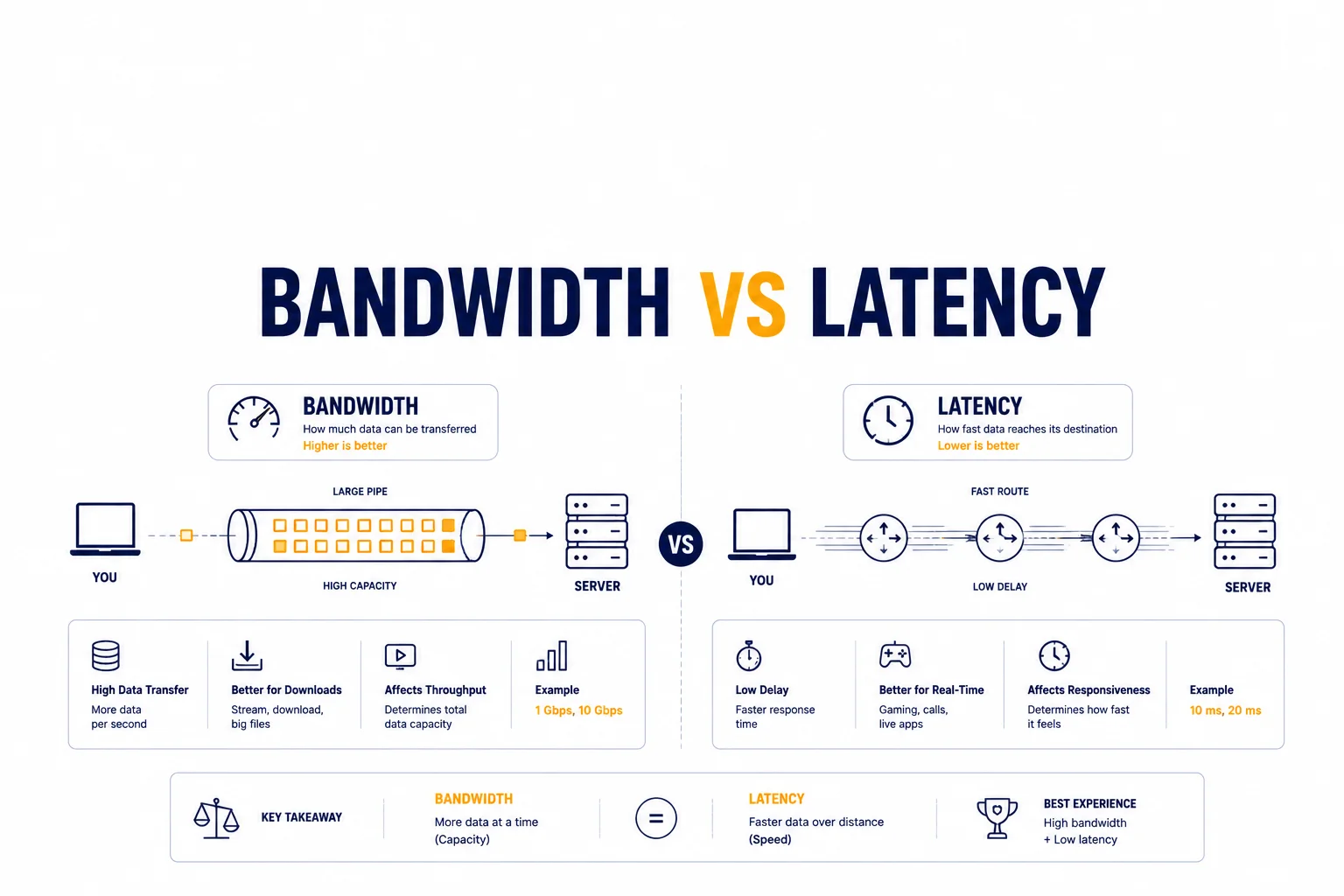

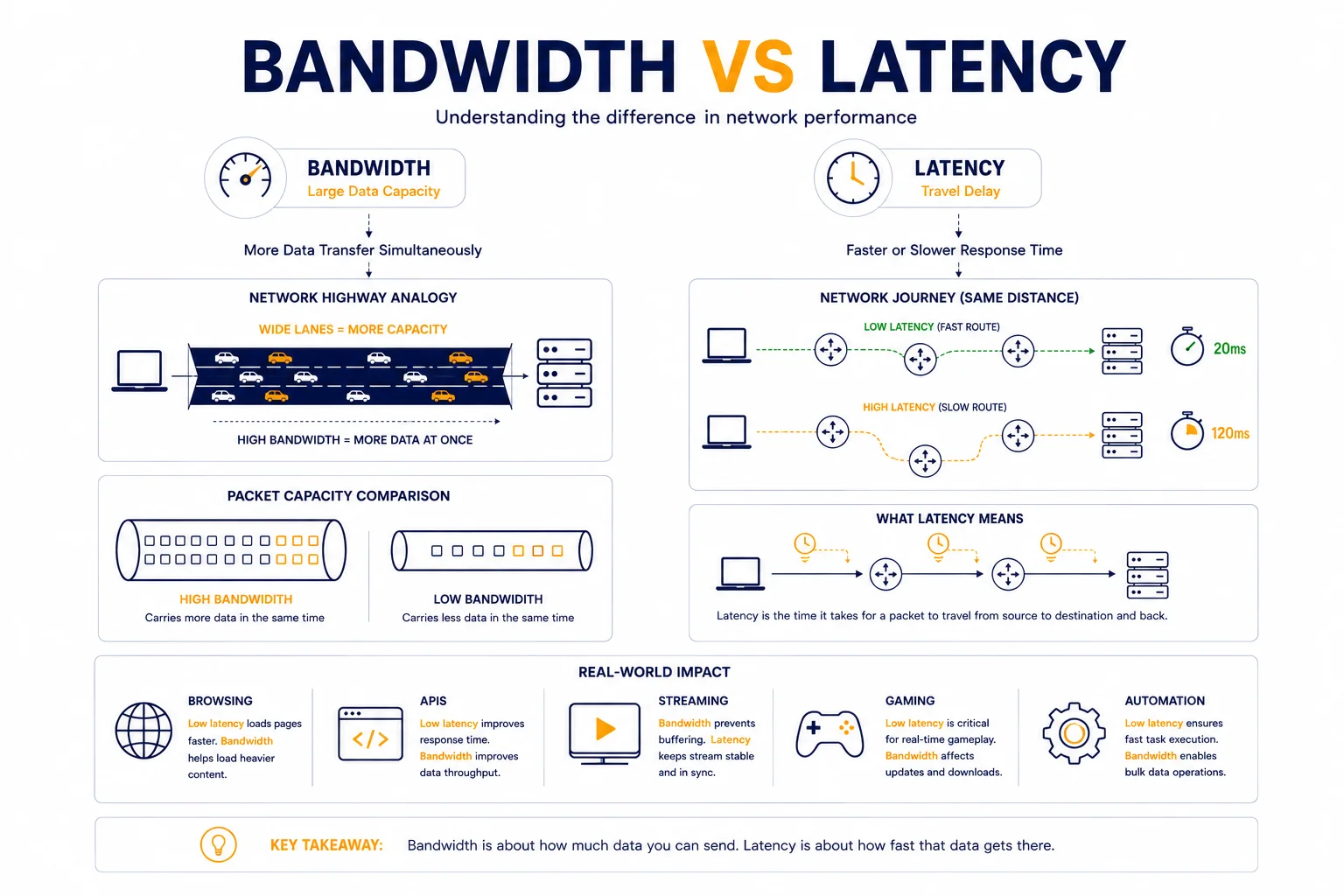

Bandwidth measures how much data a connection can transfer per second, while latency measures how long data takes to travel across the network. High bandwidth does not guarantee a fast or responsive connection if latency is high or unstable.

Key Takeaways

- Bandwidth and latency measure different aspects of network performance

- High bandwidth does not guarantee low latency

- Latency affects responsiveness and real-time communication

- Bandwidth affects download and transfer capacity

- Stable routing is often more important than raw speed

Why People Often Confuse Bandwidth and Latency

Many users assume that a “fast internet connection” automatically means:

- low delay

- smooth browsing

- responsive applications

In reality, bandwidth and latency describe different properties of a connection.

A connection may have extremely high bandwidth but still feel slow because of high or unstable latency.

This is especially noticeable in:

- gaming

- APIs

- proxies

- automation systems

- video calls

What Bandwidth Actually Means

Bandwidth describes how much data can move through a connection during a certain amount of time.

It is usually measured in:

- Mbps (megabits per second)

- Gbps (gigabits per second)

Higher bandwidth allows more data to be transferred in the same amount of time.

Examples:

- downloading large files

- streaming 4K video

- transferring backups

- cloud synchronization

Bandwidth is essentially network capacity.
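Because bandwidth is quoted in bits per second while file sizes are usually quoted in bytes, a quick unit check helps when estimating transfer times. The sketch below computes an idealized lower bound that ignores latency, protocol overhead, and congestion; the function names are illustrative and not taken from any particular tool.

```python
def transfer_time_seconds(size_bytes: float, bandwidth_bps: float) -> float:
    """Idealized time to move size_bytes over a link of bandwidth_bps (bits per second).

    Ignores latency, protocol overhead, and congestion, so real transfers take longer.
    """
    return size_bytes * 8 / bandwidth_bps


def mbps_to_megabytes_per_second(mbps: float) -> float:
    """Convert megabits per second to megabytes per second (8 bits per byte)."""
    return mbps / 8


# A 1 GB (10**9 bytes) backup over a 100 Mbps link:
print(transfer_time_seconds(1e9, 100e6))  # 80.0 (seconds)
print(mbps_to_megabytes_per_second(100))  # 12.5 (MB/s)
```

A "100 Mbps" connection therefore moves at most about 12.5 megabytes per second, which is why download managers that report MB/s seem to show an eighth of the advertised speed.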

What Latency Actually Means

Latency measures delay.

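More specifically, it measures how long data takes to travel between systems. Tools such as ping usually report round-trip time (RTT): the time for a packet to reach the destination plus the time for the reply to come back.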

Latency is usually measured in milliseconds (ms).
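Ping-style tools use ICMP, which often needs elevated privileges to send from a program. A common workaround, sketched below under that assumption, is to time TCP connection setup: the client's connect returns after roughly one round trip (SYN out, SYN-ACK back), so the elapsed time approximates RTT plus a little local overhead. The helper name `tcp_connect_latency_ms` is hypothetical, and the demo listens on localhost so it runs without a network; point it at a real IP address and port to measure a remote path.

```python
import socket
import statistics
import time


def tcp_connect_latency_ms(host: str, port: int, samples: int = 5, timeout: float = 2.0) -> list[float]:
    """Time TCP connection setup to host:port, returning one value in ms per sample.

    Hypothetical helper. Pass an IP address: a hostname would add a DNS lookup to every sample.
    """
    results = []
    for _ in range(samples):
        start = time.perf_counter()
        # create_connection returns once the handshake completes (about one round trip).
        with socket.create_connection((host, port), timeout=timeout):
            pass
        results.append((time.perf_counter() - start) * 1000.0)
    return results


if __name__ == "__main__":
    # Local listener so the demo needs no network; the OS completes handshakes
    # for queued connections even though accept() is never called.
    with socket.create_server(("127.0.0.1", 0)) as server:
        port = server.getsockname()[1]
        rtts = tcp_connect_latency_ms("127.0.0.1", port)
        print(f"median connect latency: {statistics.median(rtts):.3f} ms")
```

Reporting the median of several samples, rather than a single measurement, smooths out one-off scheduling delays on the measuring machine.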

Low latency means:

- requests arrive quickly

- responses feel immediate

- communication remains responsive

High latency creates:

- lag

- delayed responses

- unstable interactions

For a deeper explanation, see Proxy Latency Explained.

Simple Real-World Analogy

Imagine a highway.

Bandwidth is:

👉 how many cars the highway can handle at once.

Latency is:

👉 how long it takes one car to reach the destination.

A huge highway with traffic jams may still feel slow.

Similarly, a high-bandwidth connection with unstable latency can perform poorly.

Why Low Latency Often Matters More

In real infrastructure, many systems constantly send small requests rather than transferring large files.

Examples include:

- websites

- APIs

- automation tools

- proxy traffic

- cloud services

Each small request usually spends more time waiting on round trips than transferring data, so these workloads depend heavily on responsiveness.

Even moderate latency increases may create:

- delayed requests

- timeout errors

- unstable sessions

High Bandwidth Does Not Fix Bad Latency

One common misconception:

👉 “If bandwidth is high enough, everything should be fast.”

This is not true.

For example:

| Connection | Bandwidth | Latency |
| --- | --- | --- |
| Connection A | 1 Gbps | 350 ms |
| Connection B | 100 Mbps | 20 ms |

For browsing, APIs, and automation, Connection B may feel dramatically faster despite lower bandwidth.
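A rough model makes the comparison concrete: a small request's completion time is the number of round trips multiplied by the RTT, plus the time needed to push the payload through the link. The sketch below assumes a hypothetical 50 KB API response and three round trips (roughly the TCP handshake, a TLS 1.3 handshake, and the HTTP request itself on a fresh connection). It ignores TCP slow start, server processing time, and packet loss, so treat the numbers as illustrative.

```python
def request_time_ms(payload_bytes: int, bandwidth_bps: float, rtt_ms: float, round_trips: int = 1) -> float:
    """Rough completion time of one request: waiting on round trips plus transfer time.

    Simplified model: no slow start, no server processing time, no packet loss.
    """
    transfer_ms = payload_bytes * 8 * 1000 / bandwidth_bps
    return round_trips * rtt_ms + transfer_ms


connections = {
    "Connection A (1 Gbps, 350 ms)": (1e9, 350),
    "Connection B (100 Mbps, 20 ms)": (100e6, 20),
}

for name, (bandwidth_bps, rtt_ms) in connections.items():
    # Hypothetical 50 KB response over a new HTTPS connection (about 3 round trips).
    total = request_time_ms(50_000, bandwidth_bps, rtt_ms, round_trips=3)
    print(f"{name}: {total:.1f} ms")
# Connection A (1 Gbps, 350 ms): 1050.4 ms
# Connection B (100 Mbps, 20 ms): 64.0 ms
```

In this model, Connection A's tenfold bandwidth advantage saves 3.6 ms of transfer time, while its extra latency costs about a second per request.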

Why Latency Matters for Proxies

Proxy systems are especially sensitive to latency because traffic travels through additional routing layers.

Typical flow:

Client → Proxy → Website → Proxy → Client

Each extra hop increases:

- travel time

- routing complexity

- response delay
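Because every request crosses both the client-to-proxy leg and the proxy-to-website leg, their round-trip times add up. The sketch below uses made-up RTT values to compare a direct connection with two hypothetical proxy locations; `proxy_overhead_ms` stands in for processing time inside the proxy and is likewise an assumption.

```python
def proxied_rtt_ms(client_to_proxy_ms: float, proxy_to_target_ms: float, proxy_overhead_ms: float = 0.0) -> float:
    """RTT seen by the client when requests are relayed through a proxy.

    Client -> Proxy -> Website -> Proxy -> Client: both legs are traversed
    in each direction, so their round-trip times add, plus proxy processing time.
    """
    return client_to_proxy_ms + proxy_to_target_ms + proxy_overhead_ms


# All values below are hypothetical.
direct_ms = 40.0
nearby_proxy = proxied_rtt_ms(5.0, 38.0, proxy_overhead_ms=2.0)
distant_proxy = proxied_rtt_ms(150.0, 140.0, proxy_overhead_ms=2.0)
print(f"direct: {direct_ms} ms, nearby proxy: {nearby_proxy} ms, distant proxy: {distant_proxy} ms")
# direct: 40.0 ms, nearby proxy: 45.0 ms, distant proxy: 292.0 ms
```

A proxy close to the client or to the target adds little delay, while a proxy that forces traffic through a distant detour multiplies it regardless of how much bandwidth the proxy offers.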

This is why low-latency proxy infrastructure often outperforms proxies marketed simply as “high speed”.

For related context, see Low Latency Proxies: How to Choose the Fastest Proxy Network.

Why Bandwidth Still Matters

Bandwidth remains extremely important for:

- large downloads

- streaming

- media delivery

- cloud storage

- CDN infrastructure

If bandwidth becomes too limited:

- congestion increases

- queues form

- packet loss may appear

This is why healthy networks require both:

- sufficient bandwidth

- stable latency

How Congestion Affects Both Metrics

Congestion affects bandwidth and latency at the same time: it reduces the throughput available to each connection and adds queueing delay.

When infrastructure becomes overloaded:

- traffic queues increase

- routers begin dropping packets

- response delays grow

This often creates:

- buffering

- unstable loading

- failed requests
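Queueing theory gives a feel for why delay grows so quickly near overload. The sketch below uses the textbook M/M/1 queue (random Poisson arrivals, a single server with exponentially distributed service times), where the mean time a packet spends in the system is 1 / (μ − λ), with μ the service rate and λ the arrival rate. Real router queues do not follow these assumptions exactly, and the 10,000 packets-per-second service rate is hypothetical; the point is the shape of the curve as utilization approaches 100%.

```python
def mm1_delay_ms(utilization: float, service_rate_pps: float) -> float:
    """Mean time in an M/M/1 queue (waiting plus transmission), in milliseconds.

    utilization = arrival_rate / service_rate, and mean time = 1 / (service_rate - arrival_rate).
    """
    if not 0 <= utilization < 1:
        raise ValueError("utilization must be in [0, 1); at 1 or above the queue grows without bound")
    arrival_rate_pps = utilization * service_rate_pps
    return 1000.0 / (service_rate_pps - arrival_rate_pps)


# Hypothetical link that can forward 10,000 packets per second.
for utilization in (0.5, 0.9, 0.99):
    print(f"{utilization:.0%} utilized: {mm1_delay_ms(utilization, 10_000):.2f} ms")
# 50% utilized: 0.20 ms
# 90% utilized: 1.00 ms
# 99% utilized: 10.00 ms
```

Going from 50% to 99% utilization roughly doubles the traffic carried but multiplies the mean delay fifty times, which is why an overloaded link feels slow long before it runs out of raw capacity.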

For related context, see What Is Packet Loss and Why It Happens.

Why Stable Routing Is Critical

Even high-quality infrastructure can perform poorly if routing paths become unstable.

Routing problems may cause:

- fluctuating latency

- retransmissions

- inconsistent response times

This is why engineers often analyze network paths instead of relying only on speed tests.

You can analyze routes using the IP Trace Tool.

Latency vs Bandwidth in Different Workloads

Different applications prioritize different metrics.

| Workload | More Important Metric |
| --- | --- |
| Gaming | latency |
| Video streaming | bandwidth |
| APIs | latency |
| Web browsing | latency |
| Large downloads | bandwidth |
| Automation systems | latency |
| Cloud backups | bandwidth |

This is why there is no universal “perfect internet speed”.

Why Packet Loss Makes Everything Worse

Packet loss often amplifies both latency and bandwidth problems.

When packets disappear:

- retransmissions increase

- requests slow down

- congestion grows

This may create situations where:

- bandwidth appears acceptable

- but the connection still feels unstable
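One way to see how loss and latency cap throughput regardless of link capacity is the Mathis et al. approximation for a single long-lived TCP flow: throughput ≈ (MSS / RTT) × √(3/2) / √p, where MSS is the segment size and p is the packet loss rate. It models classic Reno-style congestion control; modern algorithms such as CUBIC or BBR behave differently, so the sketch below illustrates the trend rather than predicting real speeds. The segment size, RTT, and loss values are hypothetical.

```python
import math


def mathis_throughput_bps(mss_bytes: int, rtt_s: float, loss_rate: float) -> float:
    """Approximate steady-state throughput (bits/s) of one Reno-style TCP flow.

    Mathis et al. approximation: (MSS / RTT) * sqrt(3/2) / sqrt(loss_rate).
    """
    if loss_rate <= 0:
        raise ValueError("the approximation requires a non-zero loss rate")
    return (mss_bytes * 8 / rtt_s) * math.sqrt(1.5) / math.sqrt(loss_rate)


# Hypothetical: 1460-byte segments and 1% loss, at two different round-trip times.
for rtt_s in (0.020, 0.100):
    mbps = mathis_throughput_bps(1460, rtt_s, 0.01) / 1e6
    print(f"RTT {rtt_s * 1000:.0f} ms: ~{mbps:.2f} Mbps per flow")
# RTT 20 ms: ~7.15 Mbps per flow
# RTT 100 ms: ~1.43 Mbps per flow
```

Both figures are far below the capacity of a 1 Gbps link, which is how bandwidth can appear acceptable while individual connections still feel slow.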

How Engineers Measure Network Quality

Professional infrastructure monitoring rarely relies on Mbps alone.

Instead, engineers monitor:

- latency consistency

- packet loss

- jitter

- route stability

- response variance

This provides a more realistic view of actual network behavior.
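A minimal sketch of turning raw probe results into the metrics listed above, assuming each probe yields an RTT in milliseconds, or `None` when no reply arrived. Jitter has several definitions; this one uses the mean absolute difference between consecutive replies (RTP's RFC 3550 uses an exponentially smoothed variant of the same idea). The function name and sample values are hypothetical.

```python
import statistics
from typing import Optional, Sequence


def summarize_probes(rtts_ms: Sequence[Optional[float]]) -> dict[str, float]:
    """Summarize latency probes; None marks a probe that received no reply (lost)."""
    received = [r for r in rtts_ms if r is not None]
    if not received:
        raise ValueError("no replies received")
    loss_pct = 100.0 * (len(rtts_ms) - len(received)) / len(rtts_ms)
    # Jitter: mean absolute change between consecutive received RTTs.
    changes = [abs(b - a) for a, b in zip(received, received[1:])]
    return {
        "loss_pct": loss_pct,
        "mean_ms": statistics.fmean(received),
        "stdev_ms": statistics.pstdev(received),
        "jitter_ms": statistics.fmean(changes) if changes else 0.0,
        "max_ms": max(received),
    }


# Hypothetical samples: mostly ~20 ms, one lost probe, one 250 ms spike.
print(summarize_probes([20, 22, 21, None, 250, 20]))
```

A single 250 ms spike pulls the mean far above the typical 20 ms and dominates both jitter and the maximum, which is exactly the behavior a Mbps figure never reveals.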

Real Infrastructure Example

Imagine two cloud servers.

Server A:

- 10 Gbps connection

- unstable routing

- 250 ms latency spikes

Server B:

- 1 Gbps connection

- stable 20 ms latency

For APIs, websites, and automation, Server B may deliver a significantly better user experience.

Why AI Systems and Automation Prefer Stable Latency

Modern automation systems often depend on predictable timing.

Unstable latency may cause:

- failed sessions

- detection anomalies

- inconsistent request timing

- retry storms

This is why stable routing becomes critical in large-scale infrastructure environments.
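Retry storms happen when many clients time out together and retry in lockstep, adding load exactly when the network is already struggling. A common mitigation is exponential backoff with random jitter. The sketch below uses the "full jitter" variant, where retry n waits a random time between 0 and min(cap, base × 2^n); the helper name and the base and cap values are hypothetical, not recommendations.

```python
import random
from typing import Optional


def backoff_delays(attempts: int, base_s: float = 0.1, cap_s: float = 10.0,
                   rng: Optional[random.Random] = None) -> list[float]:
    """Delays (seconds) before each retry, using exponential backoff with full jitter.

    Hypothetical helper; base_s and cap_s are illustrative defaults.
    """
    rng = rng or random.Random()
    # The ceiling doubles per attempt up to cap_s; the random draw spreads clients apart.
    return [rng.uniform(0, min(cap_s, base_s * 2 ** n)) for n in range(attempts)]


# Seeded generator so the example is reproducible.
for n, delay in enumerate(backoff_delays(5, rng=random.Random(7))):
    print(f"retry {n + 1}: wait {delay:.3f} s")
```

In a real client, each delay would be passed to `time.sleep` (or an async equivalent) before the next attempt, and the total number of attempts should stay bounded.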

Additional Tools for Network Diagnostics

Understanding bandwidth and latency together usually requires multiple diagnostics.

Useful tools include:

- Proxy Checker – tests response behavior and connectivity

- IP Lookup – identifies ASN and network ownership

- IP Trace Tool – analyzes routing paths and latency consistency

Combining these diagnostics gives a more complete picture of network quality.

Glossary

Bandwidth

The maximum amount of data a network connection can transfer per unit of time, usually expressed in Mbps or Gbps.

Latency

The time it takes for data to travel across a network, usually expressed in milliseconds.

Packet Loss

Packets that are sent but never reach their destination.

Routing

The process of directing traffic through network paths.

Frequently asked questions

Below are answers to the most frequently asked questions.

What is the main difference between bandwidth and latency?

Bandwidth measures capacity, while latency measures delay.

Why can a high-speed connection still feel slow?

Because high latency or unstable routing may delay communication.

Which matters more for gaming?

Latency usually matters more than bandwidth.

Why are proxies sensitive to latency?

Because proxy traffic passes through additional routing layers, increasing travel time.